The real value of Dify lies in how easily you can build, deploy, and scale an idea, no matter how complex. It’s built for fast prototyping, smooth iteration, and reliable deployment at any level. Let’s start by integrating an LLM reliably into your application. In this guide, you’ll build a simple chatbot that classifies the user’s question, responds directly using the LLM, and enhances the response with a country-specific fun fact.


Step 1: Create a New Workflow (2 min)

- Go to Studio > Workflow > Create from Blank > Orchestrate > New Chatflow > Create

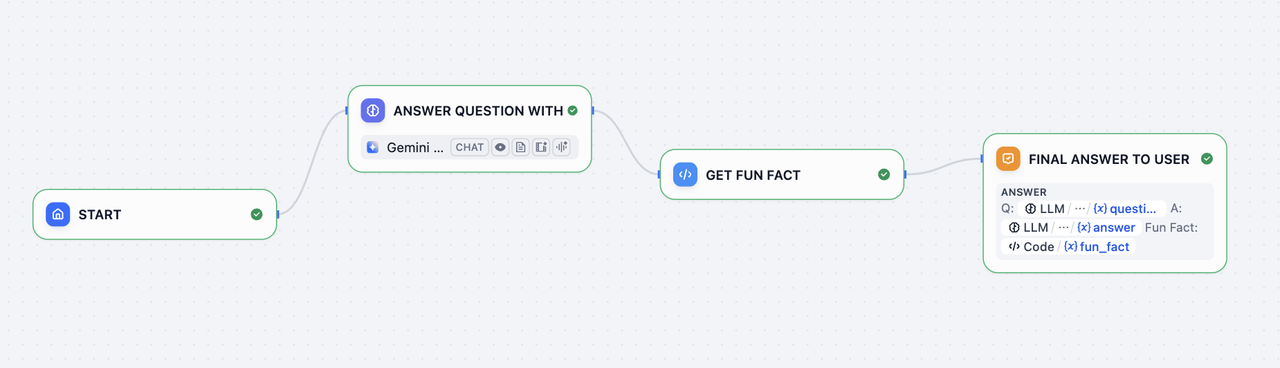

Step 2: Add Workflow Nodes (6 min)

1. LLM Node and Output: Understand and Answer the Question

The LLM node sends a prompt to a language model to generate a response based on user input. It abstracts away the complexity of API calls, rate limits, and infrastructure, so you can focus on designing logic.

Enable Structured Output

Structured Output lets you control what the LLM returns and ensures consistent, machine-readable output for downstream steps such as precise data extraction or conditional logic.
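To make "machine-readable" concrete, here is a minimal sketch of what downstream logic can rely on once the output has a fixed shape. It assumes the model returns a JSON object with a `country` field (the field this guide reads later); the `answer` field and the `extract_country` helper are hypothetical names used only for illustration, and this is not Dify's internal implementation.

```python
import json


def extract_country(raw_output: str):
    """Return the `country` value from a JSON-formatted LLM output, or None.

    Assumes the structured output is a JSON object containing a string
    field named `country`; any other shape is treated as "no country".
    """
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        # Free-form text cannot be parsed, so nothing can be extracted.
        return None
    if not isinstance(data, dict):
        return None
    country = data.get("country")
    if isinstance(country, str) and country.strip():
        return country.strip()
    return None


# Hypothetical example output; `answer` is an assumed field name.
sample = '{"answer": "Paris is the capital of France.", "country": "France"}'
print(extract_country(sample))
```

With a fixed schema, the lookup is a single key access; with free-form text, the same step would need fragile string matching.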

- Toggle Output Variables > Structured ON, click Configure, and then click Import from JSON

- Paste:

2. Code Block: Get Fun Fact

The Code node executes custom logic using code. It lets you inject code exactly where it is needed within a visual workflow, saving you from wiring up an entire backend.

Configure Input Variable
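As a rough sketch of what the body of this code node could look like, the example below assumes a Python code node whose entry point is a `main` function that receives one input variable named `country` and returns a dict of output variables. The `FUN_FACTS` table, its entries, and the `fun_fact` output name are placeholders chosen for illustration, not the code from the original guide.

```python
# Hypothetical lookup table; replace with your own data source.
FUN_FACTS = {
    "france": "Mainland France is nicknamed 'l'Hexagone' because of its roughly six-sided shape.",
    "japan": "Japan is an archipelago made up of thousands of islands.",
    "italy": "Italy completely surrounds two independent states: San Marino and Vatican City.",
}


def main(country: str) -> dict:
    """Return a fun fact for the given country.

    `country` is assumed to be wired to structured_output > country.
    """
    # Normalize the input so that " France " and "france" match the same key.
    key = (country or "").strip().lower()
    fact = FUN_FACTS.get(key, "No fun fact is available for this country yet.")
    # Each key in the returned dict is assumed to map to an output
    # variable declared on the node (here: fun_fact).
    return {"fun_fact": fact}
```

The fallback message keeps the workflow from failing when the LLM returns an empty value or a country that is not in the table.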

Change one Input Variable name to “country” and set the variable to structured_output > country

3. Answer Node: Final Answer to User

The Answer node creates a clean final output to return to the user.

End Workflow:

Step 3: Test the Bot (3 min)

Click Preview, then ask:

- “What is the capital of France?”

- “Tell me about Japanese cuisine”

- “Describe the culture in Italy”

- Any other questions